2017-03-25 16:18:39

16 votes, rating 6

FUMBBL Network Architecture

With the latest upgrade of Deeproot, the FUMBBL network changed somewhat. Some of you may be interested in this type of thing, so I figured I'd do a writeup of how it's all set up now, after the change.

To start the explanation, this is a very high level diagram of the basic physical architecture:

In order to be able to get a bit into the details of this, there are a couple of concepts that I need to explain first: Virtualisation and VLANs.

Virtualisation

Virtualisation is a technology where one computer has specialised software installed that can run multiple operating system (OS) installations at the same time. In virtualisation contexts, this software is called a "hypervisor" and the machine it runs on is called the "host machine" (the two terms are often used interchangeably). The systems running "inside" the hypervisor are called "guest machines" or simply "guests". The reason this is done is to make it easier to manage the individual guest machines. A typical server will have all sorts of configuration and applications installed on it, and having this installed directly on a server (called "bare metal") makes it quite time-consuming to move the installation to a new machine should there be a need to do so (for example, if a machine has a hardware failure).

In a virtualised environment, the hypervisor provides the guests with a "virtual" set of hardware that acts the same regardless of the physical machine underneath. The hypervisor itself is relatively simple to install should there be a need to do that. This means that moving a virtual guest machine to a new host is more or less a matter of copying a couple of files.

Another benefit is that it's possible to share resources should the various guests not necessarily be running at 100% resource usage at all times (which is an incredibly rare event). There are additional benefits as well (relating to backups and something called "snapshots"), which I will not go into any further at this point as it's a bit out of scope for this blog. It'll be long enough as it is :)

There is a cost to virtualisation, of course. The added layer between the guest and the hardware generates a bit of overhead (i.e. slows things down). This overhead ends up as a performance loss of roughly 10-15%. You could also have situations where guests generate enough load on the shared resources that they all slow down. While CPU, memory and disk can be managed pretty easily, the machines do share the same basic platform (PCI Express and memory bus mainly). In certain workloads, this can cause congestion (and it is the reason I'm not planning to migrate the database server for FUMBBL to the new platform).

VLANs

This technology is related to networking, which in theory could be a huge blog post on its own. I will go through some of the basics very quickly, and hope it's enough for those of you who don't know this already to follow.

Normal computer networks (like the ones all of us use to access this site) can be described using what's called the OSI model (Open Systems Interconnection Model; practically always referred to with the abbreviation). This model describes communication using a 7 level structure.

At the bottom you find layer 1, the physical layer, which is a specification of electricity flowing over the wires. While this has its interesting aspects, I'm skipping this to conserve space.

Above that you have layer 2, the "data link layer". This is where protocols like "ethernet" reside. For most people, this layer is rarely of interest, but it does contain things some people run into at times. The physical address of networking equipment (the "MAC address") is defined on this layer. This is technically where the concept of a VLAN is defined, but it's easier to describe it in the context of layer 3.

Layer 3, the "network layer", is where most people will start to recognise concepts. This is where the IP protocol hangs out. The IP address is a logical address for a network interface (as opposed to the physical address defined in layer 2, which normally never changes). We'll get back to the IP concept in a bit.

Layer 4 is the "transport layer". Common protocols here are TCP and UDP. This layer adds the concept of "connections" to the mix. This is too generic for VLANs, so I'll leave the details out.

Layers 5 to 7 are similarly too high level to be relevant in this scope. They're called the "session layer", the "presentation layer" and the "application layer" respectively. If you are interested in the details, there's a reasonable Wikipedia article about them all. Layer 7 is where you have protocols such as HTTP (SSL, or the S part in HTTPS, sits in layer 6), SSH, Telnet, DHCP, etc.

So, let's go back to layer 3 and IP numbers. A typical IP number (IPv4; I'm leaving IPv6 out of this entirely) is usually written as a sequence of four 8 bit numbers (0-255) such as 192.168.1.10. This number describes the logical address of a network card. In order for the Internet to function, each of these numbers (there are roughly 4.3 billion of them) is split into two parts, called the "network address" and the "host identifier". One way of splitting this would be to simply cut the full 32 bits (4x8) into two groups of 16 bits each and call it a day, but the way it actually works is a bit more generalised in order to allow routing (the process of sending network traffic to the correct destination) to work at different levels depending on where the routers are located. To do this, typical network configurations use two numbers: an IP address (192.168.1.10 for example) and a subnet mask (typically 255.255.255.0). This "net mask" tells the machines how large a particular network is. The 255.255.255.0 mask means that the network is the first 24 bits (192.168.1.*) and the host identifier is the last 8 bits (*.*.*.10). I won't go into further detail here, but you can look up CIDR (Classless Inter-Domain Routing) for more details. Instead of giving two full "quads", networks are often defined with a shorthand: 192.168.1.0/24, where /24 means the first 24 bits are the network address. All this is really defined in binary numbers, where it makes a bit more sense than in the decimal numbers you traditionally see.
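To make the address/mask split a bit more concrete, here is a minimal sketch using Python's standard `ipaddress` module with the example address and mask from the paragraph above. The helper name `as_bits` is made up for this illustration.

```python
import ipaddress

# The example address and subnet mask from the text.
iface = ipaddress.ip_interface("192.168.1.10/255.255.255.0")

network = iface.network                           # the network part, in CIDR form
host_id = int(iface.ip) & int(network.hostmask)   # the host identifier part


def as_bits(addr):
    """Render an IPv4 address as four dotted groups of 8 bits (illustrative helper)."""
    return ".".join(f"{octet:08b}" for octet in addr.packed)


print(network)                    # CIDR shorthand for the network
print(network.prefixlen)          # number of bits in the network address
print(host_id)                    # host identifier within that network
print(as_bits(iface.ip))          # the address in binary
print(as_bits(network.netmask))   # the mask in binary: 24 ones, then 8 zeros
```

Looking at the two binary lines side by side shows why the mask makes more sense in binary: every bit position where the mask has a 1 belongs to the network address, and the rest is the host identifier.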

What about VLANs? We're almost there. Normally, you connect computers together using a device called a switch (I'm assuming a wired network). A switch basically keeps track of the hardware addresses (Layer 2, MAC addresses) of devices connected to each port and sends traffic that comes in on one of the ports out to the port where the destination is located. Mind you, there are subtleties here, such as broadcast messages, that I'm skipping for now. Generally speaking, you have all devices connected to a switch configured with the same network address. While it's possible to run multiple networks over the same set of wires, this is not normally advisable due to the ability for this traffic to be eavesdropped on (simply by changing the network card IP address, or switching it to what's called "promiscuous mode", where it receives all traffic sent on the line).
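The way a switch keeps track of hardware addresses can be sketched in a few lines of Python. This is a toy model, not how any particular switch is implemented: the class name `LearningSwitch` and the short MAC strings are made up, and the "send to every other port when the destination is unknown" behaviour is the usual flooding rule, which is one of the subtleties skipped above.

```python
class LearningSwitch:
    """Toy model of a layer 2 switch that learns which MAC address is on which port."""

    def __init__(self, num_ports):
        self.num_ports = num_ports
        self.mac_table = {}  # MAC address -> port number

    def receive(self, in_port, src_mac, dst_mac):
        """Handle one incoming frame and return the ports it is sent out on."""
        # Learn (or refresh) where the sender is located.
        self.mac_table[src_mac] = in_port

        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            # Unknown destination: flood to every port except the incoming one.
            return [p for p in range(self.num_ports) if p != in_port]
        if out_port == in_port:
            # Destination is on the same port the frame came from: nothing to do.
            return []
        return [out_port]


switch = LearningSwitch(num_ports=4)
print(switch.receive(0, "aa:aa", "bb:bb"))  # bb:bb not known yet, so the frame is flooded
print(switch.receive(1, "bb:bb", "aa:aa"))  # aa:aa was learned on port 0
print(switch.receive(0, "aa:aa", "bb:bb"))  # bb:bb is now known on port 1
```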

To solve this issue in a traditional setting (before VLANs), you'd install multiple network interfaces in the machine, and configure them separately for the different networks. You'd also have separate switches for each network you wanted access to.

While VLANs were originally designed to do load balancing of networking equipment, they're not often used for that purpose in modern systems. Instead, they're used to reduce the amount of networking infrastructure (switches and cables) that is needed to set up an enterprise network. The idea is that the switch is configured to "tag" traffic that comes in on a port with a VLAN (often visualised as a colour). This incoming traffic can then only be forwarded to a port which is also set up with the same VLAN. For example, let's say you have a 24 port switch. You configure the first 8 ports to be "Red", the next 8 to be "Green" and the last 8 to be "Blue" (in reality, these are assigned numerical VLAN IDs). In that configuration, the single 24 port switch will act as three separate network switches which will not allow any "cross-talk" between the differently coloured ports.
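The 24 port example above can be written down as a small Python sketch. The colour names stand in for numeric VLAN IDs, and the function names `can_forward` and `broadcast_ports` are invented for this illustration; a real switch does this in hardware.

```python
# Port-based VLAN assignment for the 24 port example:
# ports 1-8 are "Red", 9-16 are "Green" and 17-24 are "Blue".
PORT_VLAN = {}
for port in range(1, 25):
    if port <= 8:
        PORT_VLAN[port] = "Red"
    elif port <= 16:
        PORT_VLAN[port] = "Green"
    else:
        PORT_VLAN[port] = "Blue"


def can_forward(in_port, out_port):
    """Traffic may only leave on a different port that has the same colour."""
    return in_port != out_port and PORT_VLAN[in_port] == PORT_VLAN[out_port]


def broadcast_ports(in_port):
    """Ports a broadcast arriving on in_port is allowed to reach."""
    return [p for p in sorted(PORT_VLAN) if can_forward(in_port, p)]


print(can_forward(1, 8))    # both ports are Red
print(can_forward(8, 9))    # Red to Green is blocked
print(broadcast_ports(20))  # only the other Blue ports
```

Each colour behaves like its own 8 port switch: a broadcast from port 20 never shows up on a Red or Green port.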

Now you're asking "ok, so what? What's the use of that, and how does that save on the amount of cable that has to be routed?". Let's say you have two rooms with a few computers in each room. These all have different needs and some computers in each room are supposed to be in each of the three different networks. Without VLANs, each room would have three separate switches (obviously, in normal enterprise environments, all this equipment is hidden in a networking closet somewhere else), and each of these switches would have a cable running to the switch for the other room (or more traditionally a cable from each switch going to the gateway/firewall machine of the network). These two rooms could in theory be very far apart, meaning it'd be a lot of cable between these two rooms.

With VLANs, not only can you assign a specific VLAN ID (or colour) to a port, a port can also carry multiple VLANs at the same time. This is sometimes called "trunking" (the terminology varies depending on the manufacturer of the hardware). What this means is that the switch can send traffic that's bound for multiple VLANs out the same port. At the receiving end, a VLAN aware switch looks at which colour each piece of traffic is tagged with, and only forwards it to the correspondingly coloured ports. This means that in our hypothetical setup, only a single cable needs to be run between the two rooms and that cable will carry all three colours of traffic.
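On the wire, the "colour" is normally carried as a 4 byte tag defined by the IEEE 802.1Q standard (not named above, but it is what tagged VLANs generally use). The tag is inserted right after the two MAC addresses in the ethernet frame. The following is a rough sketch of adding and stripping such a tag; the function names are made up, and it ignores the frame checksum that real hardware would recompute.

```python
import struct

TPID_8021Q = 0x8100  # value that marks a frame as carrying an 802.1Q tag


def add_vlan_tag(frame, vlan_id, priority=0):
    """Insert an 802.1Q tag after the destination and source MAC (12 bytes)."""
    if not 1 <= vlan_id <= 4094:
        raise ValueError("VLAN ID must be in the range 1-4094")
    if not 0 <= priority <= 7:
        raise ValueError("priority must be in the range 0-7")
    # 16 bit tag control field: 3 bits priority, 1 bit DEI (left at 0), 12 bits VLAN ID.
    tci = (priority << 13) | vlan_id
    tag = struct.pack("!HH", TPID_8021Q, tci)
    return frame[:12] + tag + frame[12:]


def strip_vlan_tag(frame):
    """Return (vlan_id, untagged_frame); vlan_id is None if the frame has no tag."""
    if len(frame) < 16:
        return None, frame
    tpid, tci = struct.unpack("!HH", frame[12:16])
    if tpid != TPID_8021Q:
        return None, frame
    return tci & 0x0FFF, frame[:12] + frame[16:]


# A made-up frame: destination MAC, source MAC, EtherType 0x0800 (IPv4), payload.
frame = bytes(6) + bytes([2, 0, 0, 0, 0, 1]) + b"\x08\x00" + b"payload"
tagged = add_vlan_tag(frame, vlan_id=30)
print(len(tagged) - len(frame))   # size of the added tag in bytes
print(strip_vlan_tag(tagged)[0])  # VLAN ID read back from the tag
```

A trunk port sends frames with the tag in place, while a normal (untagged) port strips it before the frame reaches the connected device, which is why ordinary computers never need to know about VLANs at all.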

Probably roughly as clear as mud, but you can think of VLANs as virtual network cards and cables. They separate traffic much like having more actual hardware would.

Ok, now we can go back to the FUMBBL specific setup. Deeproot, the new server purchased with the money gathered during the February 2017 fundraiser, is the machine that runs the hypervisor (Microsoft Hyper-V Server 2016 to be precise). There are currently two guests running on the machine: Icepelt (running pfSense, which is firewall software based on FreeBSD) and Puggy (running Postfix, a mail server, on top of Ubuntu Server, a Linux distribution).

Hyper-V allows the setup of virtual switches which in turn can be connected to the individual guests' virtual interfaces and/or the physical network interfaces of the host machine. The FUMBBL setup has a number of different networks defined:

WAN - This isn't exactly a network from the FUMBBL perspective. It's simply the connection to the Internet. It's a DHCP configured IP address given to me by my ISP.

Ethernet LAN - Strictly a wired network used for all the "normal" desktop machines and consoles that run in my home. This is a /24 network which is tagged in the switches.

WiFi LAN - The wireless network I have in my home. Another /24, also tagged.

PUB - A "public" traffic network. This is defined as traffic that goes between the FUMBBL servers and the Internet. Again, another tagged /24.

BCK - A "backend" traffic network. This is traffic that goes between the different servers (database queries, traffic between the FFB server and the website, etc). As with the others, this is also a tagged /24.

MGM - A "management" traffic network. This is used by me to do maintenance tasks, such as logging into the servers over SSH and copying files between machines. This is another /24 and runs tagged within the server network, and untagged outside (pretty much strictly to LAN Switch 1 in the diagram above) because the WiFi Access Point I use cannot be managed on a tagged network. I may end up unifying this somewhat at some point later as it's not as clean as it should be, possibly by tagging it all the way and configuring the port that the Access Point is connected to so that it strips that particular VLAN ID when traffic is sent out.

Within the server rack that houses the servers, I'm mostly using separate physical equipment for each of these networks. Servers have multiple network cards to correspond with each of these networks. The Ethernet and WiFi LAN networks are running tagged though, as Deeproot simply doesn't have enough network cards available to use (5 of them in the machine). The cable running from the server switch to LAN Switch 1 is a trunked connection that carries both the LAN networks and the management network. Similarly, the cable between LAN Switch 1 and LAN Switch 2 is trunked to carry Ethernet LAN and MGM. My own computer is connected to LAN Switch 2, where I need access to the management network.
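The two trunked cables described above can be modelled as sets of networks, which makes it easy to check which networks actually make it from one switch to another. This is only a sketch of the description in the paragraph; the dictionary layout and the function name `networks_along` are made up, and nothing here reflects actual switch configuration.

```python
# Networks carried on each trunked cable, as described in the text.
TRUNKS = {
    ("Server Switch", "LAN Switch 1"): {"Ethernet LAN", "WiFi LAN", "MGM"},
    ("LAN Switch 1", "LAN Switch 2"): {"Ethernet LAN", "MGM"},
}


def networks_along(path):
    """Return the networks that are carried on every trunk along a path of switches."""
    carried = None
    for a, b in zip(path, path[1:]):
        link = TRUNKS.get((a, b))
        if link is None:
            link = TRUNKS.get((b, a))  # cables work in both directions
        if link is None:
            raise KeyError(f"no trunk between {a} and {b}")
        carried = set(link) if carried is None else carried & link
    return carried if carried is not None else set()


path = ["Server Switch", "LAN Switch 1", "LAN Switch 2"]
print(sorted(networks_along(path)))
```

The result for the full path contains the management network, which is what lets a computer on LAN Switch 2 reach the servers for maintenance, while the WiFi LAN stops at LAN Switch 1 where the access point lives.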

In detail, the network equipment I use is (all supporting gigabit speeds):

Server Switch: NetGear GS724T, a 24 port "Smart Switch"

LAN Switch 1: Ubiquiti UniFi Switch 8 POE-150W, an 8 port "fully managed" switch with POE (power over ethernet) support.

LAN Switch 2: NetGear GS108Ev3, an 8 port smart switch.

Access Point: Ubiquiti UniFi AP-AC-Pro, a Power over Ethernet enabled access point (i.e. the WiFi device).

Management: Ubiquiti UniFi Cloud Key, a small device which allows easier access to management of the UniFi devices.

There are also a couple of "dumb" NetGear switches in the network, which mainly extend the ethernet LAN to various devices around the "FUMBBL HQ" :)

I am considering changing some/all of the switches from NetGear to Ubiquiti. The administration and configuration system is vastly better than what NetGear offers. This would give me better control over the network as a whole. I may also add a boundary firewall outside the pfSense setup at some point.

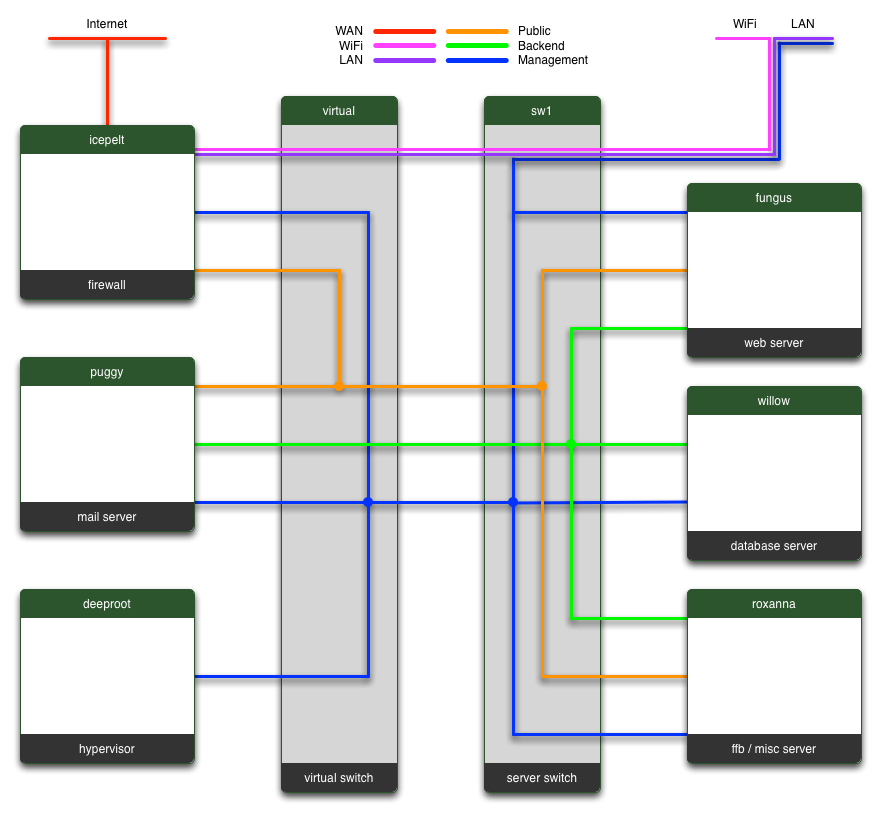

Either way, in order to give you a clear view of the different networks, I'll leave you with a diagram I've put together which shows which servers are connected to which network(s). For privacy reasons, this leaves out details on the LAN (and a few of the devices connected there). The image is intended for a page on the site that I use to view traffic (based on SNMP, another topic entirely) between the different machines.

If you read this far, feel free to write a comment below if you found this interesting. :)

Comments

Posted by pythrr on 2017-03-25 18:59:59

it's like robots and things

happy you know how all this works!

Posted by JellyBelly on 2017-03-25 23:59:16

Very interesting and detailed post, Christer, but one question ...

What happened to Borak?! I thought he got saved? ;)

Posted by Christer on 2017-03-26 00:37:45

Borak's safe in a warmer climate than Sweden.. :)

Posted by DrDiscoStu on 2017-03-26 01:39:48

Interesting! Though I understood about 9 words.

Posted by mike467 on 2017-03-26 10:55:45

Definitely interesting, thanks for taking the time to share this.

Posted by Prez on 2017-03-26 13:32:53

Excellent stuff - After self studying CompTIA for the last x months...I actually understood a lot of this. lol some of it has actually sank in :)

Posted by mister__joshua on 2017-03-26 17:20:21

A nice write up. I understood most of it, but wouldn't like to try and set it up myself! The ubiquiti kit is really good, used it for a couple of wifi networks. The management tools are indeed excellent.

Posted by Merrick18818 on 2017-03-26 22:39:21

You lost me at 'latest upgrade'.

Seriously though, thanks again for all the great work. :)
